Demystifying LLM Safety: Progress, Challenges, and Recommended Practices

Demystifying LLM Safety: Progress, Challenges, and Recommended Practices

Enterprise adoption of AI agents is accelerating, and with that comes a crucial question: How can corporations confidently deploy LLMs and agents with sensitive data while maintaining robust security? The answer lies in understanding the remarkable progress that was made, and the strategic approaches that make safe deployment achievable today.

This blog examines where LLM safety stands today and demonstrates why enterprises can move forward with confidence. Since the creation of LLMs, the industry has developed a sophisticated safety stack that makes modern models significantly safer and more reliable than their predecessors. While challenges remain (as they do in any evolving technology) the combination of proven techniques, emerging solutions, and best practices enables organizations to deploy AI systems securely. For AI professionals building with or researching LLMs, understanding both our progress and the path forward is essential for successful implementation.

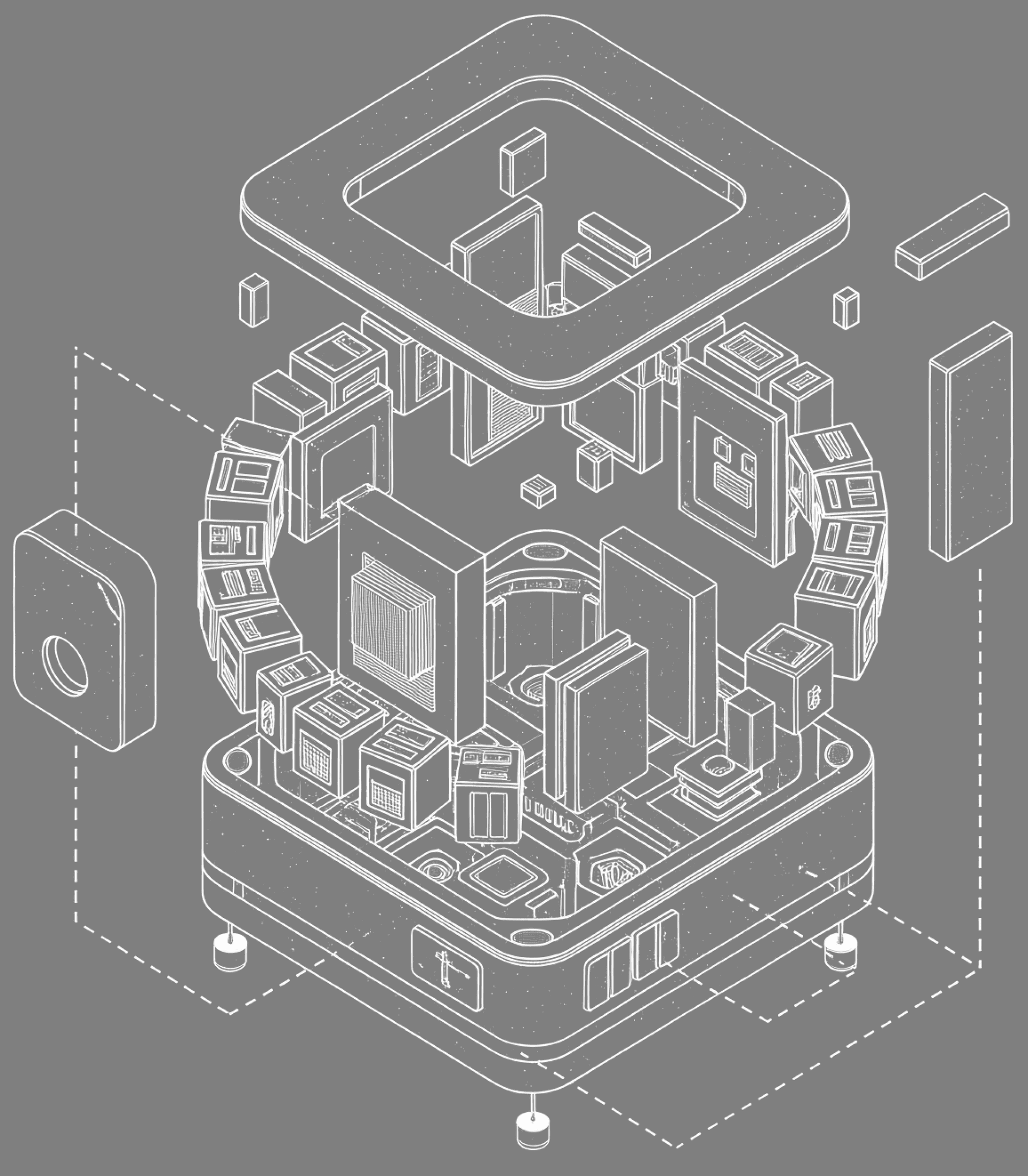

The Modern Safety Stack

Today's LLM safety isn't a single technique but a layered defense-in-depth approach spanning the entire model lifecycle.

Pre-training: Starting with Better Data

Safety begins with data filtering to remove problematic content before training. Modern filtering removes most problematic material while preserving the model's ability to discuss sensitive topics appropriately.

RLHF and Constitutional AI

Reinforcement Learning from Human Feedback (RLHF) is the cornerstone of modern LLM safety. After pre-training, models learn from human preferences between outputs, including refusing harmful requests.

Constitutional AI (CAI) extends this by training against principles rather than purely human feedback. RLAIF uses AI systems to generate training signal for scalable oversight.

These techniques work well for common cases, modern models reliably refuse obvious harmful requests. However, they're not foolproof, as adversaries can exploit gaps in the finite training dataset.

Red-teaming: Probing for Vulnerabilities

Both automated and human red-teaming have become standard practice. Automated systems attempt thousands of variations of potentially harmful prompts, while human red-teamers use creativity and domain expertise to find novel failure modes, enabling continuous improvement.

The challenge is coverage. The space of possible inputs is astronomically large, and red-teaming can only sample a fraction. Safety teams need to continuously strengthen defenses through automated techniques.

Inference-time Safeguards

Many deployments add additional safety layers at inference time. Input classifiers screen user prompts for harmful content before they reach the model. Output classifiers check model responses before returning them to users. Prompt-based filters detect and reject certain patterns. These layers add robustness and provide valuable defense-in-depth and are improving with more data and usage.

Deployment Practices

Real-world safety also depends on deployment choices:

- Usage policies

- Rate limits

- Logging and monitoring

- Human-in-the-loop systems for high-stakes applications

These practices, now standard across the industry, provide organizations with the control and visibility they need.

What's Working

The progress is genuine and measurable. Compared to base models or earlier generations, modern LLMs demonstrate remarkable safety:

- Refuse harmful requests consistently: Models reliably decline to help with clearly illegal or dangerous activities, from making explosives to writing malware.

- Handle sensitive topics better: They can discuss difficult subjects like self-harm or violence in informational contexts while avoiding content that encourages these behaviors.

- Resist simple jailbreaks: Early jailbreaks like "pretend you're an AI without restrictions" rarely work anymore.

- Maintain safety across modalities: As models handle images and other inputs, safety techniques have generalized reasonably well.

Industry-wide, there's convergence on best practices: pre-training filtering, RLHF or similar alignment techniques, red-teaming, and responsible disclosure programs. This represents significant maturation of the field.

Ongoing Challenges

While current safety measures are effective for many use cases, important challenges remain. Understanding these challenges and the available solutions enables organizations to make informed deployment decisions and implement appropriate safeguards.

Adversarial Robustness: The Jailbreak Problem

Jailbreaks persist as adversaries continually find new techniques to bypass safety measures. This reflects a fundamental tension: LLMs are trained to be helpful and follow instructions, but safety requires selective disobedience. The boundary between legitimate questions and harmful requests is often ambiguous, and adversaries exploit this.

Current defenses rely on pattern matching and training on known jailbreaks, creating a cat-and-mouse dynamic. Organizations deploying LLMs should implement multiple defensive layers and monitor for emerging attack patterns.

Prompt Injection: A Structural Vulnerability

Prompt injection is a deeper problem. When LLMs process user input and instructions in the same channel, attackers can craft inputs that override intended behavior, especially critical for LLM-powered agents.

Unlike traditional code injection, prompt injection exploits how LLMs fundamentally process text. Mitigation strategies exist (input/output separation, sandboxing, structured prompting), but haven't fully solved the problem. This must be treated as a serious architectural consideration.

Evaluation: Measuring What Matters

Current evaluations use benchmark datasets, automated red-teaming, and human evaluation. Each has limitations: benchmarks saturate and models optimize for them, automated red-teaming lacks creativity, and human evaluation doesn't scale.

We face a version of Goodhart's law: when a measure becomes a target, it ceases to be a good measure. As models are optimized for safety benchmarks, those benchmarks become less informative about real-world safety.

Agent Safety: A New Frontier

LLM agents, systems that autonomously plan, use tools, and execute multi-step tasks, represent perhaps the most pressing frontier in LLM safety.

Agents compound all the challenges of base LLMs while introducing entirely new attack surfaces. They maintain state across interactions, have access to external tools and APIs, make autonomous decisions, and can take actions with real-world consequences. A single-turn chatbot might generate harmful text; an agent might execute harmful actions.

The threat landscape includes traditional content risks (toxicity, bias) alongside novel agentic risks: unauthorized tool execution, cross-agent exploitation, goal misalignment, and cascading failures where one small error triggers a chain of increasingly severe problems.

Measuring Success:

For agents, there is no one-fits-all method to evaluate safety. Evaluation depends on the environment, tools and data that the agent has access to, the user base, agents use cases, and many more factors that shape how the agent safety should be evaluated

Conclusion

LLM safety in 2026 is a story of significant progress accompanied by important unsolved challenges. We've built systems that are meaningfully safer than early models, with increasingly sophisticated industry practices and an active research community. Current safety measures, layered defenses spanning pre-training, RLHF, red-teaming, and deployment controls, genuinely help and enable many practical applications.

For AI professionals and organizations, this means proceeding thoughtfully. Use current safety measures, they provide real value. But understand their limitations and deploy with appropriate safeguards for your use case. Don't assume that passing benchmarks guarantees safety in production. Monitor deployed systems actively, contribute to safety research and responsible disclosure when you find issues, and maintain epistemic humility about what we can and cannot guarantee.

The good news is that the field is taking safety seriously, with growing investment and talent. The challenge (and it's a significant one) is ensuring our safety measures keep pace with rapidly advancing capabilities. Organizations can deploy LLM systems today for many use cases, but should do so with realistic expectations about current safety limitations and commitment to ongoing vigilance.

For enterprises considering AI adoption, success lies in combining available safety tools with domain expertise, careful risk assessment, and appropriate human oversight. The technology offers substantial value, but responsible deployment requires understanding both what's working and what isn't.

If you're looking to implement LLM systems with robust safety practices or need guidance navigating these challenges, reach out to discuss how to deploy responsibly for your specific use case.