How We Built Cortex: An AI-Native Approach to Knowledge Management

Problem Statement

As a company specializing in data and AI, we hear the same story from every organization we talk to. Critical documents are scattered across SharePoint, OneDrive, shared drives, and personal devices.

The result?

- People spend hours hunting for information that already exists.

- New hires take months to find their footing.

- Teams duplicate work because they don't know it has been done before.

- Critical context gets lost when employees leave.

Traditional solutions don't solve this. Enterprise search returns ten blue links and hopes for the best. Folder hierarchies become labyrinths. ChatGPT-style interfaces hallucinate when they don't have the right context.

The fundamental problem is that these tools treat knowledge as static, isolated files. But real organizational knowledge is dynamic. It's interconnected. It evolves. And it needs to find you, not the other way around.

Why Existing Technical Approaches Fail

Most enterprise knowledge systems follow a shallow pipeline: extract text, chunk it arbitrarily, generate embeddings, retrieve against queries. This architecture has fundamental limitations that no amount of prompt engineering can fix.

Embedding-based retrieval is a single-shot operation. It finds similar text, not relevant answers. When a query requires synthesizing information from multiple documents, or when the answer isn't lexically similar to the question, similarity search fails silently.
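The failure mode can be reproduced with a toy example. This is a minimal sketch, not how any production system embeds text: `embed` is a hypothetical bag-of-words stand-in for an embedding model, and the two chunks are invented for illustration. The point is only the shape of the pipeline: one similarity ranking, no second look.

```python
import math
from collections import Counter

def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(text.lower().split())

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def retrieve(query: str, chunks: list[str], k: int = 1) -> list[str]:
    """Single-shot retrieval: rank once by similarity and return the top k."""
    q = embed(query)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]

# Invented chunks: the first restates the question, the second holds the answer.
chunks = [
    "why was the migration delayed is a question many teams asked",
    "the vendor contract lapsed in march so cutover moved to june",
]
top = retrieve("why was the migration delayed", chunks)
print(top[0])  # the chunk that echoes the question, not the one that answers it
```

A real embedding model captures far more meaning than word counts, so this exact pair may rank differently in practice, but the structure is the same: the ranking rewards resemblance to the query, and nothing checks whether the returned chunk answers it.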

Metadata is either absent or requires manual curation that never happens at scale.

We needed a fundamentally different architecture.

Our Approach:

We designed Cortex around a core architectural principle: treat documents as structured knowledge objects, not flat text.

This led to a system built on four technical capabilities:

- Multimodal ingestion using vision-language models to interpret document content, including charts, diagrams, and scanned images, and convert it to structured, searchable representations.

- Automated classification and tagging that extracts titles, generates summaries, and assigns categories without user intervention.

- Agentic retrieval that decomposes complex queries, executes iterative searches, and synthesizes answers from multiple source documents.

- A continuously-updated knowledge graph that extracts entities and relationships from documents, enabling traversal and discovery beyond keyword matching.

Each component is designed to work independently but compounds in value when integrated. The following sections detail how each one works.
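To make "structured knowledge object" concrete, here is a minimal sketch of what such an object could carry. The class and field names (`KnowledgeObject`, `Entity`, `relations`, and so on) are assumptions made for illustration, not the actual Cortex schema.

```python
from dataclasses import dataclass, field

@dataclass
class Entity:
    name: str
    kind: str  # e.g. "person", "project", "technology", "concept"

@dataclass
class KnowledgeObject:
    """Hypothetical shape of one ingested document."""
    doc_id: str
    title: str                 # extracted, not taken from the filename
    summary: str               # generated at ingestion
    categories: list[str]      # assigned automatically
    text: str                  # extracted text plus descriptions of visual content
    entities: list[Entity] = field(default_factory=list)
    # (subject, predicate, object) triples that feed the knowledge graph
    relations: list[tuple[str, str, str]] = field(default_factory=list)

doc = KnowledgeObject(
    doc_id="doc-001",
    title="2023 Infrastructure Migration Plan",
    summary="Plan for moving core services to new infrastructure.",
    categories=["infrastructure", "planning"],
    text="...",
    entities=[Entity("Infrastructure Migration", "project")],
    relations=[("doc-001", "describes", "Infrastructure Migration")],
)
```

Each of the four capabilities fills in part of this object: ingestion produces `text`, classification produces `title`, `summary`, and `categories`, and graph extraction produces `entities` and `relations`.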

System Components:

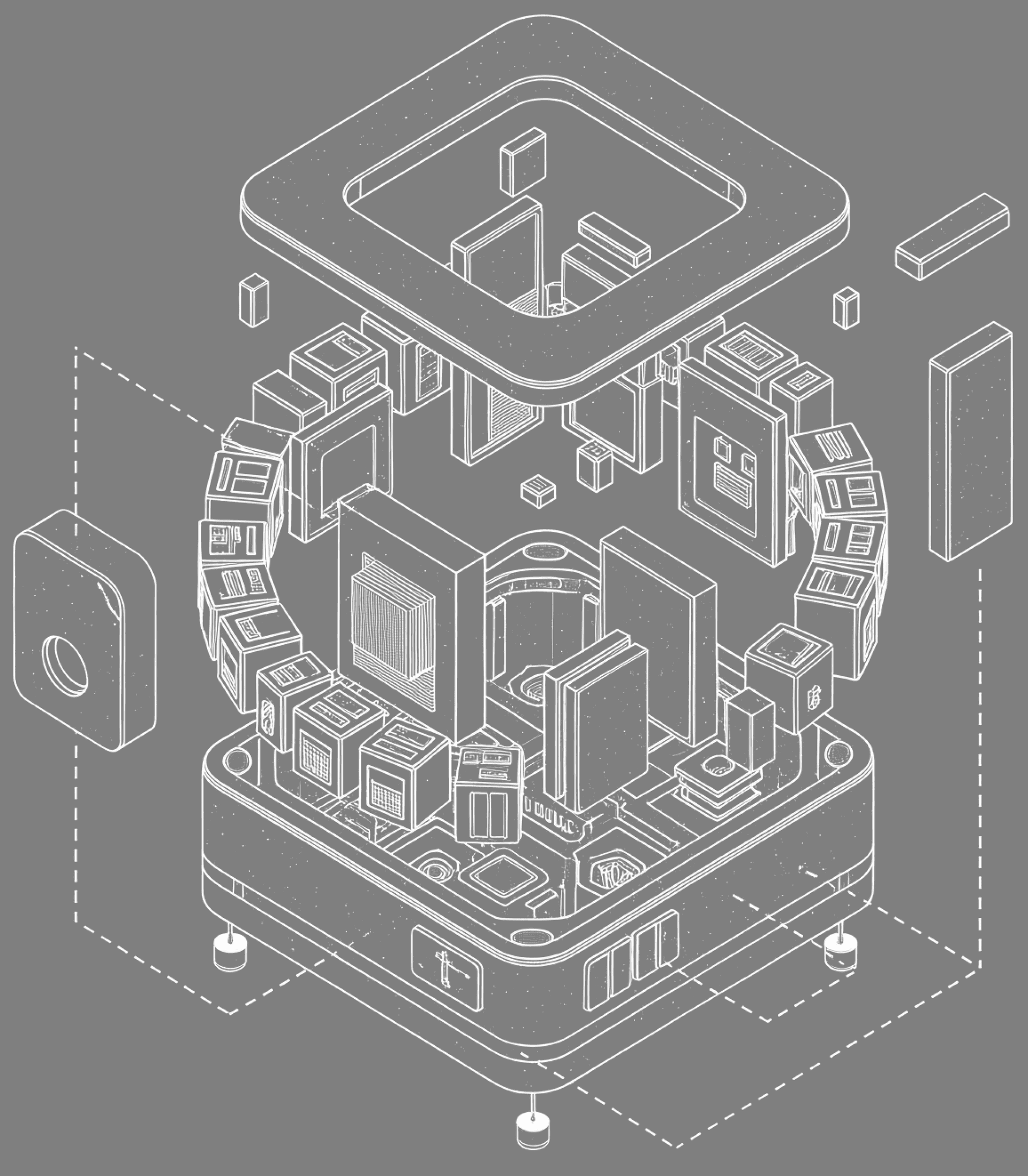

We use a modular monolithic architecture. Requests flow from clients through a REST API layer into three processing modules:

- A Workflow Engine for file processing and parallel AI tasks (classification, summarization, knowledge graph extraction)

- An AI Agents module with specialized agents for search and analysis

- An External Services module for integrations like OCR and antivirus scanning

All modules share a unified data layer with SQL, vector, and graph databases plus object storage and caching, with tenant isolation enforced across all storage systems.
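One way to enforce tenant isolation at the data layer is to make the tenant part of every storage key, so that no query can be expressed without it. The sketch below uses an in-memory dictionary as a stand-in for a real backend; `TenantScopedStore` and its methods are hypothetical names, and the actual mechanism in Cortex may differ.

```python
from typing import Optional

class TenantScopedStore:
    """In-memory stand-in for one storage backend.

    Every read and write requires a tenant_id, and the tenant is part of
    the key, so one tenant's lookup can never return another tenant's row.
    """

    def __init__(self) -> None:
        self._rows: dict[tuple[str, str], dict] = {}

    def put(self, tenant_id: str, doc_id: str, record: dict) -> None:
        self._rows[(tenant_id, doc_id)] = record

    def get(self, tenant_id: str, doc_id: str) -> Optional[dict]:
        return self._rows.get((tenant_id, doc_id))

    def list_ids(self, tenant_id: str) -> list[str]:
        return sorted(d for (t, d) in self._rows if t == tenant_id)

store = TenantScopedStore()
store.put("acme", "doc-1", {"title": "Acme budget"})
store.put("globex", "doc-1", {"title": "Globex roadmap"})

print(store.get("acme", "doc-1"))    # Acme's record only
print(store.get("initech", "doc-1")) # None: no cross-tenant fallback
```

The same idea carries over to the SQL, vector, and graph stores: the tenant scope is applied inside the storage wrapper rather than left to each caller.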

Features

Intelligent Ingestion

Before you can retrieve knowledge, you have to ingest it properly.

Traditional approaches treat documents as flat text. They run an OCR engine that often struggles with scripts such as Arabic, extract what they can, and move on. But real documents aren't just words: they're diagrams, flowcharts, screenshots, scanned handwriting, and tables that carry critical meaning.

Most systems ignore this entirely, or extract it so poorly it becomes noise.

When a document enters the system, we use vision-language models to interpret visual content the way a human would.

- A flowchart becomes a described process.

- A table becomes structured information with context.

- A diagram becomes an explanation of what it represents and why it matters.

We also run the ingestion pipeline on durable execution, so a step that fails partway through can be retried without losing the work already completed.

So the knowledge locked in that architecture diagram from 2019, the scanned whiteboard photo, or the dense financial table all becomes searchable, retrievable, and usable.
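The core idea behind durable execution can be sketched in a few lines: record each step's result in a journal, and on a re-run reuse recorded results instead of repeating the work. This is a simplified illustration under stated assumptions, not a real workflow engine. The `journal` dictionary stands in for persistent storage, and `extract_text` and `describe_visuals` are hypothetical steps with a simulated timeout.

```python
from typing import Callable

Step = tuple[str, Callable[[dict], dict]]

def run_durably(doc_id: str, steps: list[Step], journal: dict) -> dict:
    """Run steps in order, journaling each result.

    On a re-run, steps already in the journal are skipped and their stored
    results are reused, so completed work is never repeated.
    """
    state: dict = {}
    for name, step in steps:
        key = f"{doc_id}:{name}"
        if key not in journal:
            journal[key] = step(state)  # may raise; earlier entries survive
        state.update(journal[key])
    return state

calls = {"extract_text": 0, "describe_visuals": 0}

def extract_text(state: dict) -> dict:
    calls["extract_text"] += 1
    return {"text": "Q3 revenue table"}

def describe_visuals(state: dict) -> dict:
    calls["describe_visuals"] += 1
    if calls["describe_visuals"] == 1:
        raise TimeoutError("vision model timed out")  # simulated transient failure
    return {"visuals": ["Table: revenue by quarter"]}

steps: list[Step] = [("extract_text", extract_text), ("describe_visuals", describe_visuals)]
journal: dict = {}  # stand-in for a persistent store

try:
    run_durably("doc-1", steps, journal)   # first attempt fails at step two
except TimeoutError:
    pass
result = run_durably("doc-1", steps, journal)  # resumes; step one is not re-run
```

After the second call, `extract_text` has still run only once. Production engines add persistence, timers, and scheduling on top of this journal-and-replay pattern.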

Automatic Organization

Here's a familiar scenario: someone uploads a document called "Final_v2_REVISED_actual.pdf" into a shared folder with no description, no tags, and no indication of what it contains.

Now multiply that by thousands of documents across years of work. This is how knowledge becomes unfindable: not because it's lost, but because it was never organized to begin with.

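Cortex closes this gap at ingestion. For every document it extracts a title, generates a summary, and assigns categories, with no user intervention.

Each of these stages runs as an independent step in the workflow, with its own retry logic and fallback behavior. A classification failure doesn't block summarization, and low-confidence results are flagged rather than suppressed.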
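The retry, fallback, and flagging behavior described above might look like the following. This is a sketch under assumptions: `run_stage`, `StageResult`, the retry count, and the 0.6 confidence threshold are all illustrative choices, and `classify` and `summarize` are stubs standing in for model calls.

```python
from dataclasses import dataclass
from typing import Any, Callable

@dataclass
class StageResult:
    value: Any
    confidence: float
    flagged: bool = False  # True when the result should be reviewed

def run_stage(fn: Callable[[], tuple[Any, float]], fallback: Any,
              retries: int = 2, min_confidence: float = 0.6) -> StageResult:
    """Run one stage with retries; fall back and flag instead of raising."""
    for _ in range(retries + 1):
        try:
            value, confidence = fn()
            # Low-confidence output is kept, but marked for review.
            return StageResult(value, confidence, flagged=confidence < min_confidence)
        except Exception:
            continue  # retry on any failure in this sketch
    return StageResult(fallback, 0.0, flagged=True)

def classify() -> tuple[str, float]:
    raise RuntimeError("classifier unavailable")  # simulated persistent failure

def summarize() -> tuple[str, float]:
    return "Budget proposal for the 2024 migration.", 0.9

# Each stage runs on its own, so one failure does not stop the others.
results = {
    "category": run_stage(classify, fallback="uncategorized"),
    "summary": run_stage(summarize, fallback=""),
}
```

Here the document still gets its summary even though classification never succeeded, and the fallback category is flagged so it can be revisited later.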

The result is a knowledge base that stays organized by default. Every document is searchable not just by its content, but by what it's about.

Agentic Retrieval

Most retrieval systems work like this: take a query, find the most similar chunks of text, return them. It's fast, but it's shallow.

The problem is that real questions rarely map to a single chunk. "What were the key decisions around the 2023 infrastructure migration?" might require pulling context from a technical report, a project summary, and meeting notes, then synthesizing them into a coherent answer.

Traditional RAG can't do this. It retrieves once, generates once, and hopes for the best.

Our retrieval system works differently. It's agentic. It reasons about the query before retrieving. It breaks down complex questions, searches iteratively, evaluates whether it has enough context, and pulls from multiple sources when needed. If the first pass doesn't surface the right information, it adjusts and tries again.
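The loop described above can be sketched as follows. In a real system the `decompose`, `is_sufficient`, and `reformulate` callables would be backed by a language model and `search` by the document index; here they are hypothetical stubs over an invented two-document corpus, so that the control flow is visible and runnable.

```python
from typing import Callable

def agentic_retrieve(
    question: str,
    decompose: Callable[[str], list[str]],
    search: Callable[[str], list[str]],
    is_sufficient: Callable[[str, list[str]], bool],
    reformulate: Callable[[str, list[str]], list[str]],
    max_rounds: int = 3,
) -> list[str]:
    """Search iteratively until the evidence is judged sufficient."""
    evidence: list[str] = []
    queries = decompose(question)
    for _ in range(max_rounds):
        for query in queries:
            for hit in search(query):
                if hit not in evidence:
                    evidence.append(hit)
        if is_sufficient(question, evidence):
            break
        queries = reformulate(question, evidence)  # adjust and try again
    return evidence

# Invented corpus and stub policies, for illustration only.
corpus = {
    "technical_report": "migration moved databases to managed postgres",
    "meeting_notes": "team decided to postpone cutover until load tests passed",
}

def search(query: str) -> list[str]:
    words = query.split()
    return [doc for doc, text in corpus.items() if any(w in text.split() for w in words)]

evidence = agentic_retrieve(
    "What were the key decisions around the migration?",
    decompose=lambda q: ["migration"],
    search=search,
    is_sufficient=lambda q, ev: len(ev) >= 2,
    reformulate=lambda q, ev: ["cutover", "decided"],
)
```

The first round finds only the technical report. Because the sufficiency check fails, the loop reformulates the query and the second round surfaces the meeting notes, which a single-shot search on "migration" would have missed.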

Think of it less like a search engine and more like a researcher who knows your entire document library. It doesn't just match keywords; it understands what you're actually asking for and works until it finds a complete answer.

That's the difference between search and research.

Living Knowledge Graph

Documents don't exist in isolation. A project proposal references a budget. A technical report builds on a previous analysis. A policy document supersedes an older version. Traditional search can't see these connections.

The problem is that this web of relationships lives in people's heads. Experienced employees know that the 2022 infrastructure report is relevant to the current migration plan. New hires don't. When that knowledge walks out the door, the connections go with it.

We make these connections explicit and automatic.

As documents are ingested, entities are extracted: people, projects, technologies, concepts. Relationships between them are inferred from context. And crucially, these connections evolve over time as new documents enter the system and old ones become outdated.

The system surfaces connections that humans miss. Ask a question, and the answer might draw from documents you didn't know were related. Browse a project, and see every document, decision, and person associated with it, without anyone having to curate that view.
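A small sketch shows how extracted relationships make related documents discoverable. It assumes relationships are stored as (subject, predicate, object) triples and uses an in-memory adjacency map in place of a graph database. The `KnowledgeGraph` class, the document names, and the entities are all invented for illustration.

```python
from collections import defaultdict, deque

class KnowledgeGraph:
    """Minimal in-memory graph built from (subject, predicate, object) triples."""

    def __init__(self) -> None:
        self._edges: dict[str, set[tuple[str, str]]] = defaultdict(set)

    def add(self, subject: str, predicate: str, obj: str) -> None:
        # Store both directions so traversal can start from either end.
        self._edges[subject].add((predicate, obj))
        self._edges[obj].add((f"inverse:{predicate}", subject))

    def related(self, start: str, max_hops: int = 2) -> set[str]:
        """Breadth-first traversal: every node within max_hops of start."""
        seen = {start}
        frontier = deque([(start, 0)])
        while frontier:
            node, depth = frontier.popleft()
            if depth == max_hops:
                continue
            for _, neighbour in self._edges[node]:
                if neighbour not in seen:
                    seen.add(neighbour)
                    frontier.append((neighbour, depth + 1))
        return seen - {start}

graph = KnowledgeGraph()
# Triples added as each document is ingested.
graph.add("migration_plan_2024", "mentions", "Project Atlas")
graph.add("infra_report_2022", "mentions", "Project Atlas")
graph.add("infra_report_2022", "authored_by", "Dana")

nearby = graph.related("migration_plan_2024", max_hops=2)
```

The two documents share no link of their own, yet the 2022 report turns up two hops from the 2024 plan through the shared project entity. Because triples are added at ingestion time, the set of reachable nodes changes as new documents arrive.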

Conclusion

We built Cortex to make organizational knowledge actually usable.

The technology finally exists to do this right: to ingest documents intelligently, organize them automatically, retrieve them contextually, and connect them meaningfully. These four pillars work together to change how teams interact with their knowledge.

Information compounds instead of decaying. Every document uploaded makes the system smarter, better organized, and more connected.

The knowledge base becomes an asset that grows in value over time, not a graveyard of forgotten files.

Answers come with full context, synthesized from multiple sources, with reasoning and citations, not fragments that require manual assembly.

The practical impact: new hires ramp up faster because institutional knowledge is accessible, not locked in senior employees' heads. Teams stop duplicating work because they can find out what's been done before.

And critically, this all respects your organization's boundaries. Access control follows your existing hierarchy and is enforced at the data layer.

If this resonates, we'd love to show you what it looks like in practice. Reach out for a demo, or follow along as we continue to build.