Axiom Infrastructure: A Day 0 to Day 2 Journey

Who We Are

Entropy builds data and AI products for public and private sectors. Axiom is our AI-native platform: it unifies infrastructure, analytics, ML workflows, and visualization into a single product that adapts to the user. What most organizations piece together from a mess of separate tools, Axiom delivers as one experience.

This is not a deployment guide. It is the reasoning behind our choices, the things we got wrong before we got them right, and the constraints that made certain decisions obvious in hindsight. We structured this around the Day 0 / Day 1 / Day 2 framework:

- Day 0: Strategic design, tool selection, and the "why" behind every foundational choice

- Day 1: Provisioning, deploying, and wiring everything into a working development environment

- Day 2: Operating the system: observability, scaling, and what comes next

Day 0: The Planning Phase

Day 0 is where you make the decisions you will live with for years. For us, it meant asking hard questions about architecture, deployment models, and what kind of system we actually needed before provisioning a single resource.

The Core Constraint

Axiom ships two ways: as SaaS, and as on-premises deployments for clients in regulated sectors (government, banking). That single constraint sits above everything else and shapes most of what follows.

The infrastructure has to be fully reproducible across both delivery models. Whatever runs in our cloud must run identically on a client's hardware. Feature flags handle per-client capability differences, but the underlying platform cannot diverge. If we cannot hand a client the same foundation we run in SaaS, the on-premises offering is built on sand.

That immediately crossed out proprietary managed cloud services (you cannot recreate them inside a client's air-gapped data center) and bespoke deployment scripts (they rot fast, diverge faster, and become a second product to maintain). The only viable path was a deployment model portable enough to run anywhere without changes.

Why Kubernetes

Our ML platform uses Metaflow for pipeline orchestration and MLflow for experiment tracking. A single training run fans out across parallel workers with real CPU and memory demands. Coordinating those workers, managing their resources, and isolating their failures is not something you bolt onto a web server; it needs actual scheduling infrastructure.

Kubernetes solved two separate problems at once. The first is the ML workload itself: a scheduler that handles bursty, resource-intensive jobs alongside steady-state services without manual intervention. The second is deployment parity: same manifests, same Helm charts, same ArgoCD reconciliation whether we are deploying to our cloud cluster or a client's on-premises environment. When a client asks if we can run this in their setup, the answer is yes. The work is configuration, not re-architecture.

Kubernetes is not the right tool for everything. If you are building a web application with a database, the operational complexity is not worth it. If your team does not have anyone who understands networking and container orchestration, Kubernetes will slow you down before it speeds you up. We chose it because we had specific problems that it specifically solves, not because it is what everyone uses.

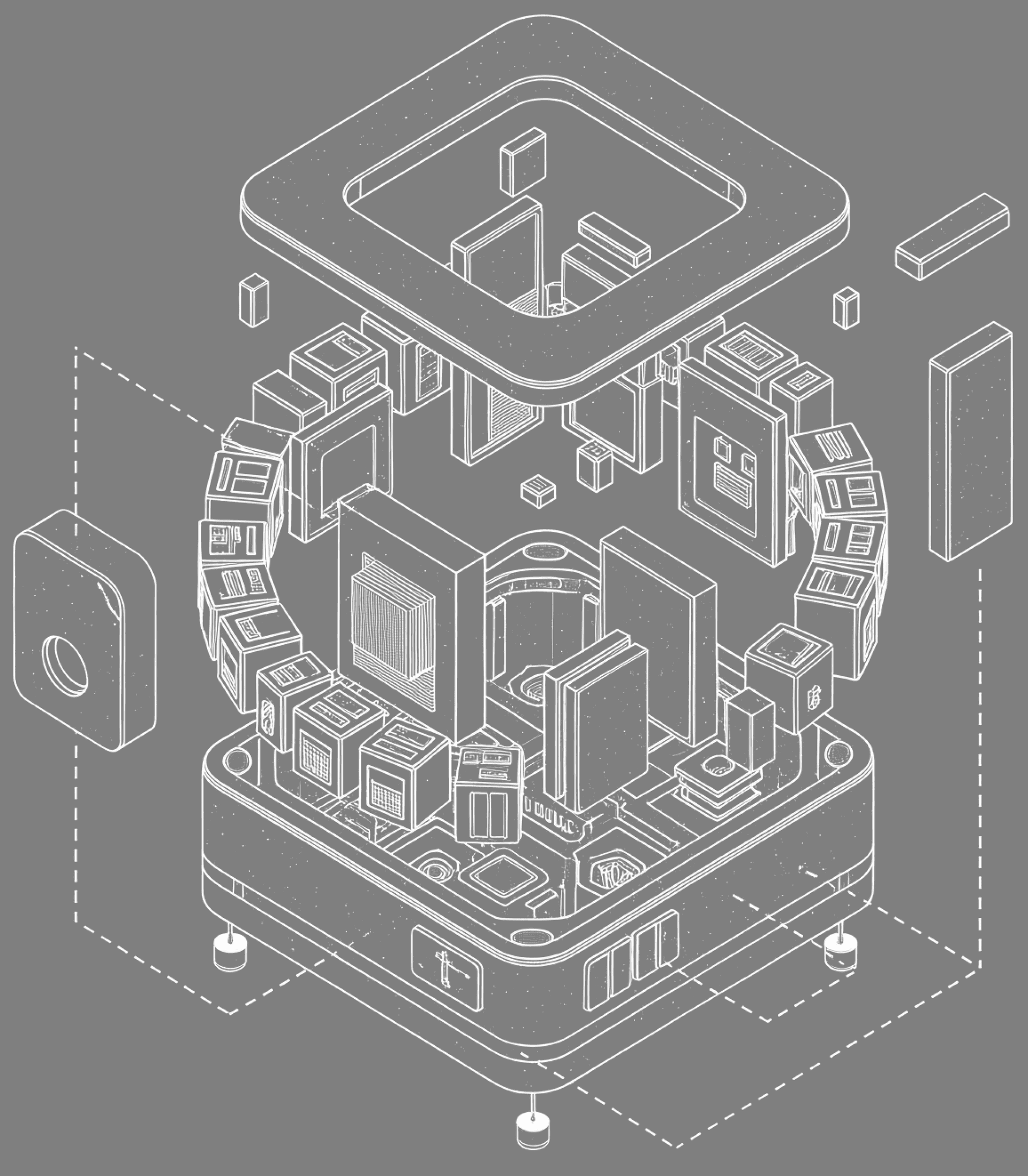

Architecture

We had three realistic options.

Monolithic: a single deployable unit. Simple to start with, but Axiom has too many independent concerns: data processing, ML training, chatbot orchestration, analytics, user management. A monolith would have become a deployment bottleneck; one team's change blocks everyone else's release.

Pure microservices: fully independent services, each with its own repository, deployment, and data store. Elegant in theory, but the operational overhead of maintaining dozens of independent CI/CD pipelines, monitoring stacks, and deployment configurations would have consumed more time than building the actual product. Microservices solve organizational scaling problems; we have a "ship the product" problem.

Modular services with shared code: this is what we chose. Each service lives in its own repository with clear boundaries, but services that share significant code coexist in the same repo.

The backend, for example, has two web servers: the main platform and the developer platform. They serve different user experiences but share models, repositories, and database functions. Splitting them into separate repos would mean extracting shared code into a library, adding a versioning and publishing step, and creating coordination overhead every time a shared component changes. For engineers working on the same codebase daily, that overhead buys nothing. They stay together.

Everything else lives in its own repository. The frontend ships several times a day; the ML trainer ships far less often. Tying them to the same pipeline means one always waits on the other. The trade-off is cross-cutting changes: when a backend API change requires a corresponding frontend update, you coordinate across repositories. For our current structure, the isolation benefit outweighs the coordination cost.

Two Environments

We run development and production. No staging.

The conventional argument for staging is pre-release validation in a production-like environment. The practical reality is that staging clusters are expensive for what they deliver: an environment idle most of the sprint that drifts from production anyway. Configurations diverge, data goes stale, and "it passed in staging" slowly stops meaning anything.

Instead, the development environment runs a stabilization phase at the end of each sprint. Same infrastructure, dedicated namespaces and isolated schemas, accumulated sprint changes validated together as a release candidate. It gives us the isolation of staging without the cost or the maintenance burden of a second cluster.

When we scale (more clients, more engineers, stricter SLAs), we will revisit this. The infrastructure supports adding a staging environment; it is a cluster and a Helm values file away. But we will add it when we need it, not when a best-practices checklist tells us to.

Day 1: The Deployment Phase

Cluster and Network

The development cluster runs inside a private network with no public endpoints. Production is publicly accessible; development is not, because the data in it is representative and our clients operate in sectors where that distinction matters.

Getting developers into a private cluster without a painful access setup took some thought. We looked at the cloud provider's native VPN first. Certificate management and endpoint provisioning are part of any VPN setup regardless; that was not what stopped us. The real reason is coupling: a VPN tied to one cloud provider cannot follow us into a client's data center. Our ability to deploy anywhere depends on not locking our toolchain to any single vendor. Tailscale is one binary and one authentication step, and it works identically across all platforms.

Tailscale uses its own hostname format, which does not work well for predictable internal FQDNs. We run a custom CoreDNS configuration inside the cluster that resolves *.dev.axiom.entropy.sa to the correct services. On top of that, an NGINX API Gateway handles ingress, TLS termination, and backend routing. The result: developers reach internal services through real domain names with real certificates. No kubectl port-forward, no hacky proxying. The cluster being private is invisible at the URL level.

Platform Services

Running stateful services in Kubernetes is where most teams either build something solid or spend months maintaining things that should just work. We run PostgreSQL, Redis, Keycloak, Temporal, MinIO, and others. Early on we had to decide whether to manage these with raw Kubernetes manifests or use operators.

Raw manifests give you full control. They also make you responsible for replication, failover, backup scheduling, storage management, and upgrades for every stateful service you run. For PostgreSQL alone, that is a meaningful engineering investment before you have written a single line of product code.

We use operators where mature ones exist: CloudNativePG for PostgreSQL, Keycloak Operator for auth, an open-source alternative for Redis Enterprise, and many others. They handle the hard parts: we define a custom resource, the operator manages the lifecycle. The tradeoff is that operators are opinionated: when your requirements diverge from their assumptions, you are debugging someone else's controller code instead of your own YAML. They also add CRDs to the cluster that need managing and upgrading. We accept that cost. The line we draw: if an operator is actively maintained and fits well enough, we use it. If it fights us more than it helps, we replace it. We are not ideological about it.

Image Mirroring

Every third-party image gets mirrored to our private container registry before it goes into any manifest.

The motivation came from watching a major Helm chart provider move their public images behind a paid license. Clusters referencing those images started failing pulls. Ours did not, because we own the images we depend on.

Mirroring gives us two things. First, build reproducibility: our images come from infrastructure we control, with no dependency on external registry availability or policy changes. Second, air-gapped compatibility: for clients deploying in isolated environments, external registries are simply unreachable. Mirroring means on-premises deployment is a configuration change, not a dependency resolution problem.
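To make the mirroring rule concrete, here is a minimal sketch of how an upstream image reference could be rewritten to point at a private mirror, plus a check that flags anything still pulled from outside. The registry host `registry.internal.example/mirror`, the mirrored path layout, and all function names are hypothetical. The registry-detection rule follows the usual Docker convention for image references.

```python
# Hypothetical private registry prefix; substitute your own.
MIRROR_REGISTRY = "registry.internal.example/mirror"


def split_image_ref(ref: str) -> tuple[str, str]:
    """Split an image reference into (registry, remainder).

    Usual Docker convention: the first path component is a registry only if
    it contains a '.' or ':' or is exactly 'localhost'.
    """
    first, sep, rest = ref.partition("/")
    if sep and ("." in first or ":" in first or first == "localhost"):
        return first, rest
    # No explicit registry: Docker Hub is implied, and official images
    # (no namespace at all) live under 'library/'.
    remainder = ref if "/" in ref else f"library/{ref}"
    return "docker.io", remainder


def mirrored_ref(ref: str) -> str:
    """Rewrite an upstream reference so it resolves inside the mirror."""
    registry, remainder = split_image_ref(ref)
    return f"{MIRROR_REGISTRY}/{registry}/{remainder}"


def unmirrored(images: list[str]) -> list[str]:
    """Images that would still be pulled from outside the mirror."""
    return [ref for ref in images if not ref.startswith(MIRROR_REGISTRY + "/")]
```

A check like `unmirrored` could run in CI against the rendered manifests, so that an external reference fails the build instead of failing a pull inside an air-gapped cluster.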

Secret Management

We got this wrong twice before getting it right.

First: .env files checked into Git. Convenient in the short term. Secrets in Git history are permanent regardless of whether you delete the file. Beyond that, this approach assumes secrets are static values, baked in at build time and never changed. Second: Dockerfile ARG and ENV instructions. Felt like an improvement. ARG values are visible in image layers; ENV is visible in container inspection. Anyone with registry access has the secrets. And still no rotation: both approaches had no concept of secrets that need to change.

We now run HashiCorp Vault as the single source of truth for all credentials. Vault generates random passwords that nobody manually sets or stores, maintains full audit logs, and rotates secrets when engineers join or leave. Services get secrets injected as environment variables at deploy time. No code changes required, no new runtime dependencies. The application reads environment variables, as it always did.
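From the application's side, the contract is small. The sketch below shows one way a service might read injected credentials and fail fast when one is missing. The variable names (`DB_HOST`, `DB_USER`, `DB_PASSWORD`) and the `DatabaseSettings` type are assumptions for illustration, not the names used in Axiom.

```python
import os
from dataclasses import dataclass
from typing import Mapping


@dataclass(frozen=True)
class DatabaseSettings:
    host: str
    user: str
    password: str


def load_database_settings(env: Mapping[str, str] = os.environ) -> DatabaseSettings:
    """Read credentials from environment variables injected at deploy time.

    The code neither knows nor cares whether the values came from Vault,
    a local shell, or a test fixture.
    """
    required = ("DB_HOST", "DB_USER", "DB_PASSWORD")  # hypothetical names
    missing = [name for name in required if not env.get(name)]
    if missing:
        # Fail at startup rather than on the first query.
        raise RuntimeError(
            f"missing required environment variables: {', '.join(missing)}"
        )
    return DatabaseSettings(env["DB_HOST"], env["DB_USER"], env["DB_PASSWORD"])
```

Because the function accepts any mapping, a rotated secret requires no code change: the next deployment simply starts with a different value in the environment.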

For local development, engineers use Lemma, an internal CLI tool. One command, scoped access tokens, full audit trail. No Slack messages asking for database passwords in plain sight. The name makes sense once you remember that in mathematics, a lemma is a helper theorem used to prove something bigger. Axiom is the thing being proven; Lemma does the legwork.

GitOps

The Axiom-GitOps repository is the single source of truth for everything running in the cluster. If it is not in this repository, it should not be in the cluster.

The repository holds a single Helm chart and two value files: values.yaml as a structural template, values-development.yaml updated automatically by CI. ArgoCD watches the repository and continuously reconciles the cluster to match it. Every deployment is a Git commit.

The alternative is push-based CI/CD, where pipelines deploy directly to the cluster. The problem: a manual kubectl apply with no Git record is enough to create drift between what you think is running and what actually is. With GitOps, the repository is the truth by definition. Anything that happened outside of Git gets overwritten on the next reconciliation.
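The reconciliation idea can be sketched in a few lines. This is a toy model, not ArgoCD's actual diffing logic: it treats the repository and the cluster as two dictionaries of resource specs and computes what a pull-based reconciler would change. Removing out-of-band resources corresponds to running ArgoCD with automated sync, pruning, and self-healing enabled, which is an assumption about configuration. The resource names are made up.

```python
def plan_reconciliation(desired: dict, live: dict) -> dict:
    """Compute the actions needed to make `live` match `desired`.

    Both arguments map a resource name to its spec.
    """
    return {
        # Declared in Git but not running yet.
        "create": sorted(desired.keys() - live.keys()),
        # Running, but the spec differs from what Git declares.
        "update": sorted(
            name for name in desired.keys() & live.keys()
            if desired[name] != live[name]
        ),
        # Running with no Git record: this is drift, e.g. a manual kubectl apply.
        "prune": sorted(live.keys() - desired.keys()),
    }
```

The point of the model is the direction of authority: the plan is always derived from the repository, so a hand-applied change can only ever show up as something to update or prune.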

CI/CD

We use GitFlow: feature branches off develop, PRs into develop, promotion to main for releases.

The alternative we considered was trunk-based development: everyone commits to a single main branch. The case for it is strong: fewer merge conflicts, smaller incremental changes, the mainline always deployable. We did not adopt it for two reasons. First, release coordination: we work in sprints and deliver versioned releases to clients. GitFlow gives us a natural boundary where develop accumulates features during a sprint and promotion to main marks a release. Second, CI maturity: trunk-based development demands that every commit to the trunk be production-safe. Our test suite is growing but not yet at that confidence level. The develop branch gives code a place to integrate and stabilize before it gets promoted. When the coverage earns that trust, the branching strategy changes.

The goal across both workflows is a single source of truth: the code in develop, the latest images in the registry, and the application running in the development environment should always be the same thing.

On PR to develop: Flyway migrations run against a live PostgreSQL instance within the CI to catch SQL errors before they touch the development environment; Docker images build and cache their layers to the registry; the test suite runs. CI must pass before the PR is eligible to merge. The cached image layers are important: they prove the code builds successfully and are stored for the next workflow to reuse, so nothing gets rebuilt from scratch.

On merge to develop: a second workflow pulls the already cached images from the PR build, re-tags them with the commit SHA, and pushes them to the registry as the final, deployable images. No rebuilding; the same artifacts that passed CI are the ones that run in the cluster. Why SHAs instead of semantic versions? Because SHAs give us exact traceability: every image maps to exactly one commit. When something breaks, you trace the image tag directly to the code. Rollbacks are a tag revert with no guessing. The merge workflow acts as a gate: only code that has passed review and CI earns a commit-tagged image in the registry.

The same workflow then updates values-development.yaml in the GitOps repository with the new tags. This is the bridge between application CI and infrastructure CD: the commit SHA that was just built is now declared as the desired state. ArgoCD detects the change and deploys.
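As an illustration of that bridge step, the sketch below pins a set of services to a commit SHA in an in-memory copy of the values. The layout `values[service]["image"]["tag"]` and the function name are hypothetical, since the real chart structure is not shown here; reading and writing the YAML file and committing the result are left out.

```python
import copy
import re

# Accept abbreviated or full hexadecimal commit SHAs only.
SHA_PATTERN = re.compile(r"^[0-9a-f]{7,40}$")


def bump_image_tags(values: dict, services: list[str], commit_sha: str) -> dict:
    """Return a copy of `values` with each listed service pinned to `commit_sha`.

    Assumes a hypothetical layout of values[service]["image"]["tag"].
    """
    if not SHA_PATTERN.match(commit_sha):
        # Refuse floating tags such as 'latest': they break traceability.
        raise ValueError(f"not a commit SHA: {commit_sha!r}")
    updated = copy.deepcopy(values)  # never mutate the caller's data
    for service in services:
        updated[service]["image"]["tag"] = commit_sha
    return updated
```

Rejecting anything that is not a SHA keeps the property described above intact: every tag in the desired state maps to exactly one commit.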

Database Migrations

Flyway manages all schema changes. Two migration types, two different strategies.

Versioned migrations (V###__*.sql) handle structural changes: tables, columns, indexes. Once applied, they are immutable. You never edit them; you write a new one. Repeatable migrations (R__fn_*.sql, R__trg_*.sql) handle stored functions and triggers. These are edited in place and re-applied whenever Flyway detects a checksum change.
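A naming convention is only useful if it is enforced. The following sketch classifies migration filenames according to the patterns above and orders versioned files by their numeric version. The regular expressions are an interpretation of the V###__*.sql and R__fn_*.sql / R__trg_*.sql patterns, and the function names are hypothetical; Flyway performs its own parsing.

```python
import re

# Interpretation of the naming convention described above.
VERSIONED = re.compile(r"^V(\d+)__(\w+)\.sql$")
REPEATABLE = re.compile(r"^R__(fn|trg)_(\w+)\.sql$")


def classify_migration(filename: str) -> str:
    """Return 'versioned' or 'repeatable', or raise if the name fits neither."""
    if VERSIONED.match(filename):
        return "versioned"
    if REPEATABLE.match(filename):
        return "repeatable"
    raise ValueError(f"migration name does not follow the convention: {filename}")


def versioned_in_order(filenames: list[str]) -> list[str]:
    """Versioned migrations sorted by numeric version, lowest first."""
    matches = [
        (int(m.group(1)), name)
        for name in filenames
        if (m := VERSIONED.match(name))
    ]
    return [name for _, name in sorted(matches)]
```

A check like `classify_migration` could run as a lint step on every PR, so a misnamed file is rejected before it ever reaches the migration job.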

The reasoning: stored functions are code. Code changes. Treating function changes like structural migrations means a new versioned file every time a function evolves, which is noise. Making them repeatable means the file is always the current version, Git tracks the history, and Flyway applies whatever is current.

Rollback strategy: for versioned migrations, we fix forward. A new migration that corrects the issue is more straightforward than trying to reverse structural changes. For repeatable migrations, rollback is simpler: reverting to a previous Git commit restores the previous function definition, and Flyway re-applies it on the next run.
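The rollback behavior for repeatable migrations follows directly from checksum comparison: a Git revert changes the file content, the checksum no longer matches the one recorded for the last run, and the file is applied again. The sketch below models that decision with `zlib.crc32`. It is an illustration only; Flyway's own checksum calculation differs in detail, and the function names and the `applied_checksums` mapping are hypothetical.

```python
import zlib


def checksum(sql: str) -> int:
    """CRC32 of the migration text, with line endings normalised.

    Normalising means a CRLF/LF difference alone does not trigger a re-apply.
    """
    return zlib.crc32(sql.replace("\r\n", "\n").encode("utf-8"))


def repeatables_to_apply(
    current: dict[str, str], applied_checksums: dict[str, int]
) -> list[str]:
    """Names of repeatable migrations that are new or changed since the last run.

    `current` maps filename to SQL text; `applied_checksums` maps filename to
    the checksum recorded when it was last applied.
    """
    return sorted(
        name
        for name, sql in current.items()
        if applied_checksums.get(name) != checksum(sql)
    )
```

Note that the comparison is symmetric: moving forward to a new function body and reverting to an old one are both just "the checksum changed", which is why no separate rollback mechanism is needed for this migration type.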

We assign the backend application pods a higher ArgoCD sync wave than the Flyway job, so they sync only after the migration has completed. This means Flyway gates only the services that depend on database state. Unrelated services, like Keycloak updates or PostgreSQL operator changes, deploy in their own waves without waiting for the migration to complete.
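The ordering rule can be sketched as follows. ArgoCD reads the wave from the `argocd.argoproj.io/sync-wave` annotation and treats resources without it as wave 0. The resource names and wave numbers in the usage example are made up, and the sketch ignores sync phases, hooks, and health checks, which the real controller also takes into account.

```python
from itertools import groupby

WAVE_ANNOTATION = "argocd.argoproj.io/sync-wave"


def wave_of(resource: dict) -> int:
    """Sync wave of a manifest; resources without the annotation are wave 0."""
    annotations = resource.get("metadata", {}).get("annotations", {})
    return int(annotations.get(WAVE_ANNOTATION, "0"))


def apply_order(resources: list[dict]) -> list[list[str]]:
    """Group resource names into waves, lowest wave first."""
    ordered = sorted(resources, key=wave_of)  # stable: keeps input order within a wave
    return [
        [resource["metadata"]["name"] for resource in group]
        for _, group in groupby(ordered, key=wave_of)
    ]


# Hypothetical manifests: the migration job sits one wave below the backend.
manifests = [
    {"metadata": {"name": "backend", "annotations": {WAVE_ANNOTATION: "2"}}},
    {"metadata": {"name": "flyway-migrate", "annotations": {WAVE_ANNOTATION: "1"}}},
    {"metadata": {"name": "backend-config"}},
]
print(apply_order(manifests))
```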

Testing

Tests run against real cluster services: real PostgreSQL, real Keycloak, real Redis. No mocks.

The argument for mocks is speed and isolation. The problem is that mocks test your assumptions about how a service behaves, not whether the service actually behaves that way. A mock database will not surface a type coercion bug in a stored function. A mock Keycloak will not catch a JWT validation failure under a specific realm configuration. You end up with a passing test suite and production bugs that the tests were structurally incapable of catching.

Tests require VPN access to the cluster and are slower than in-memory mocks. We have decided that is worth it.

Each test makes one HTTP request through the full stack and asserts at every layer: HTTP status, database state, external service state. One test covers what would otherwise be split across unit, integration, and end-to-end tests.

We enforce a rule: code must support testing, not the other way around. If a service does not accept its dependencies through constructor injection, it gets refactored before tests are written. Tests never monkey-patch globals or override singletons. This discipline keeps both the codebase and the test suite honest.
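Here is a minimal sketch of what constructor injection looks like in practice. All names (`UserRepository`, `SignupService`, `SqliteUserRepository`) are hypothetical. An in-memory SQLite database stands in for PostgreSQL only so the example runs anywhere; it is a real database engine rather than a mock, but it is not a substitute for testing against the actual service.

```python
import sqlite3
from typing import Protocol


class UserRepository(Protocol):
    def email_exists(self, email: str) -> bool: ...
    def insert(self, email: str) -> int: ...


class SignupService:
    """Dependencies arrive through the constructor; nothing is a global."""

    def __init__(self, users: UserRepository) -> None:
        self._users = users

    def register(self, email: str) -> int:
        normalized = email.strip().lower()
        if self._users.email_exists(normalized):
            raise ValueError(f"already registered: {normalized}")
        return self._users.insert(normalized)


class SqliteUserRepository:
    """Stand-in repository backed by a real (in-memory) SQL engine."""

    def __init__(self, conn: sqlite3.Connection) -> None:
        self._conn = conn
        self._conn.execute(
            "CREATE TABLE IF NOT EXISTS users (id INTEGER PRIMARY KEY, email TEXT UNIQUE)"
        )

    def email_exists(self, email: str) -> bool:
        row = self._conn.execute(
            "SELECT 1 FROM users WHERE email = ?", (email,)
        ).fetchone()
        return row is not None

    def insert(self, email: str) -> int:
        cursor = self._conn.execute("INSERT INTO users (email) VALUES (?)", (email,))
        return cursor.lastrowid
```

A test constructs the service with whatever connection it was given, calls `register`, and then asserts on both the return value and the rows in the database. Nothing needs to be patched, because nothing was hidden.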

Day 2: The Operations Phase

Development is stable. The pipeline runs itself. Focus is shifting from "make it work" to "make it work better."

Scaling

Karpenter handles node provisioning. The cluster runs somewhere between 20 and 30 deployable units: application services, platform services, ML infrastructure, monitoring, internal DNS, and more. ML training jobs spike heavily and unpredictably on top of steady-state workloads. Static node groups either overprovision and waste money or fall short under load. Karpenter provisions nodes when pods cannot be scheduled and removes them when they are no longer needed. For a cluster where "average load" is not a useful concept, dynamic provisioning is the right model.

Observability

To extract uniform telemetry from all services, we use OpenTelemetry, whose SDKs standardize how telemetry is collected and shipped to our storage backends. This decouples instrumentation from the backends themselves, so we can swap or add backends without touching service code.

For storage we chose Prometheus for metrics, Tempo for traces, and Loki for logs. We picked Loki over Elasticsearch for its lightweight indexing: rather than building a full-text index of every log line upfront as Elasticsearch does, Loki indexes only a small set of labels per log stream and scans the log content at query time. Elasticsearch is a great tool, but its full-text indexing is geared toward search-heavy use cases such as security monitoring and business intelligence rather than general observability, and the overhead shows. Prometheus does more than store metrics in our setup: it links metrics to traces, which lets us correlate a spike directly with the trace events behind it. That means we can hunt down what caused a P99 regression and catch incidents before they fully materialize, which reduces MTTD significantly.

The best optimization in the entire setup is not about performance but about developer experience. Instead of asking every developer to learn the internals of our stack, we packaged all observability hooks into a single observability.py module that they import directly into their code. Telemetry is collected without the developer needing to know which libraries or implementation sit behind the hooks: latency, throughput, and error rate are all captured automatically, alongside traces and logs.
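To give a sense of what such a hook can look like, here is a deliberately simplified sketch of a decorator that records call count, error count, and latency. It is not the contents of observability.py: the `observed` decorator and the `METRICS` dictionary are hypothetical, and an in-process dictionary stands in for the OpenTelemetry exporters a real module would use.

```python
import functools
import logging
import time
from collections import defaultdict

logger = logging.getLogger("observability")

# In-process stand-in for a metrics backend: operation name -> counters.
METRICS = defaultdict(lambda: {"calls": 0, "errors": 0, "total_seconds": 0.0})


def observed(operation: str):
    """Decorator that records calls, errors, and latency for a function."""

    def decorator(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            start = time.perf_counter()
            try:
                return func(*args, **kwargs)
            except Exception:
                METRICS[operation]["errors"] += 1
                logger.exception("operation %s failed", operation)
                raise  # never swallow the caller's exception
            finally:
                # Runs on success and failure alike.
                METRICS[operation]["calls"] += 1
                METRICS[operation]["total_seconds"] += time.perf_counter() - start

        return wrapper

    return decorator


@observed("divide")
def divide(a: float, b: float) -> float:
    return a / b
```

The developer writes one line, `@observed("divide")`, and the wrapped function behaves exactly as before. Throughput and error rate fall out of the counters, and average latency is `total_seconds / calls`.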

On the deployment side, our observability stack runs on the same Kubernetes cluster as the services. We use the Grafana Labs-maintained operators for Loki, Grafana, and Tempo, and a community-maintained one for Prometheus. This kept setup overhead low and gave us a lot of flexibility. The obvious weakness is co-location: if a cluster-level incident occurs, the observability stack goes down with everything else, which is the worst time to lose visibility. The fix is to migrate the stack either to an external server or to Grafana Cloud. Either option adds latency, cost, and management overhead to event ingestion, but it eliminates the risk of an infrastructure failure blinding incident response.

One deliberate omission is eBPF-based observability. We evaluated it and decided against it, not because it lacks power, but because kernel-level telemetry is inherently context-blind compared to SDK hooks, which instrument userland functions and return intelligible application-level data. For our use case, the tradeoff was not worth the technical debt of maintaining kernel-level tooling.

What Is Next

The way engineers work is changing, and we are building toward it deliberately.

The current model: an engineer owns a domain, writes the code, writes the tests, reviews the output, and ships. That works, but most of the time in that cycle is implementation, not thinking. Writing a test suite for a feature that follows well-understood patterns, wiring up a new endpoint that mirrors a dozen others, updating configuration to reflect a decision already made somewhere else. These are execution tasks, not design tasks.

We are moving toward a model where that execution layer is handled by AI agents working under an engineer's direction. The engineer defines the feature, sets the constraints, reviews the output. The agent handles the implementation: writing the code, generating the tests, validating against what already exists in the codebase.

We are already partway there. Today we use AI-assisted commands that, given the right architectural context, produce test coverage for a new feature: the engineer specifies what needs to be tested and why, the agent writes the suite following our exact patterns, conventions, and service boundaries. That is not autocomplete. It is a working understanding of the system.

The next steps are more ambitious: agents that write feature code from a specification, run the CI pipeline, watch the deployment, and flag regressions before anyone notices. An engineer who manages a workgroup of agents rather than a ticket queue.

This only works on top of infrastructure that is honest. Declarative deployments give an agent a clear picture of what is running. Real-service testing means the agent's output gets validated against the actual system, not a mock. A clean architectural layering means the agent can write code that fits without inventing its own conventions. The decisions we made in Day 0 and Day 1 were not just about operational stability; they were about building something a machine can reason about.

We are early. But the foundation is there, and the direction is clear.

Closing Thoughts

Every decision in this post was shaped by the same constraints: a complex product serving sensitive clients, a dual delivery model, and the discipline to not over-engineer.

Kubernetes is not the answer to every problem, but it was the answer to ours. GitOps is not the simplest way to deploy software, but it is the most auditable and reproducible. Running tests against real infrastructure is not the fastest approach, but it is the one that gives us genuine confidence.

If there is one takeaway: infrastructure decisions are not about choosing the best tool. They are about choosing the right tool for your specific constraints, your delivery model, your clients' requirements, and the problems you actually have. Everything else is noise.

Written by the Axiom infrastructure team at Entropy.